"Let's think about this step by step"

Crafting an AI Prompt for a Wiser Life

For the last few months, I’ve been spending a lot of time with my new friend, ChatGPT. And like everyone else who has used GPT extensively, I’ve gone down the rabbit hole of “prompt engineering” – that is, the art and science (dare I say, “magic”) of getting GPT to do what you want. The thing about GPT is that it “hallucinates”, which is a polite way of saying “lies through its digital teeth”. OpenAI’s invention is extremely eager to please its users, to the degree that it will happily make up answers to questions. I particularly appreciate when you ask it to cite sources, and it confidently provides very specific references to journal articles or books… that don’t exist. If you point out that those sources don’t exist, ChatGPT will apologize politely, which I suppose is better than a human pathological liar who would militantly protest the accusation.

So getting GPT not to lie, let alone getting it to respond in the kind of consistent manner you need in order to integrate natural language into a web app, requires some work. And that work quickly reveals the presence of quirks, or perhaps “incantations”, that significantly improve the quality of GPT’s responses. One of my favorites is: “Let’s think step by step.”

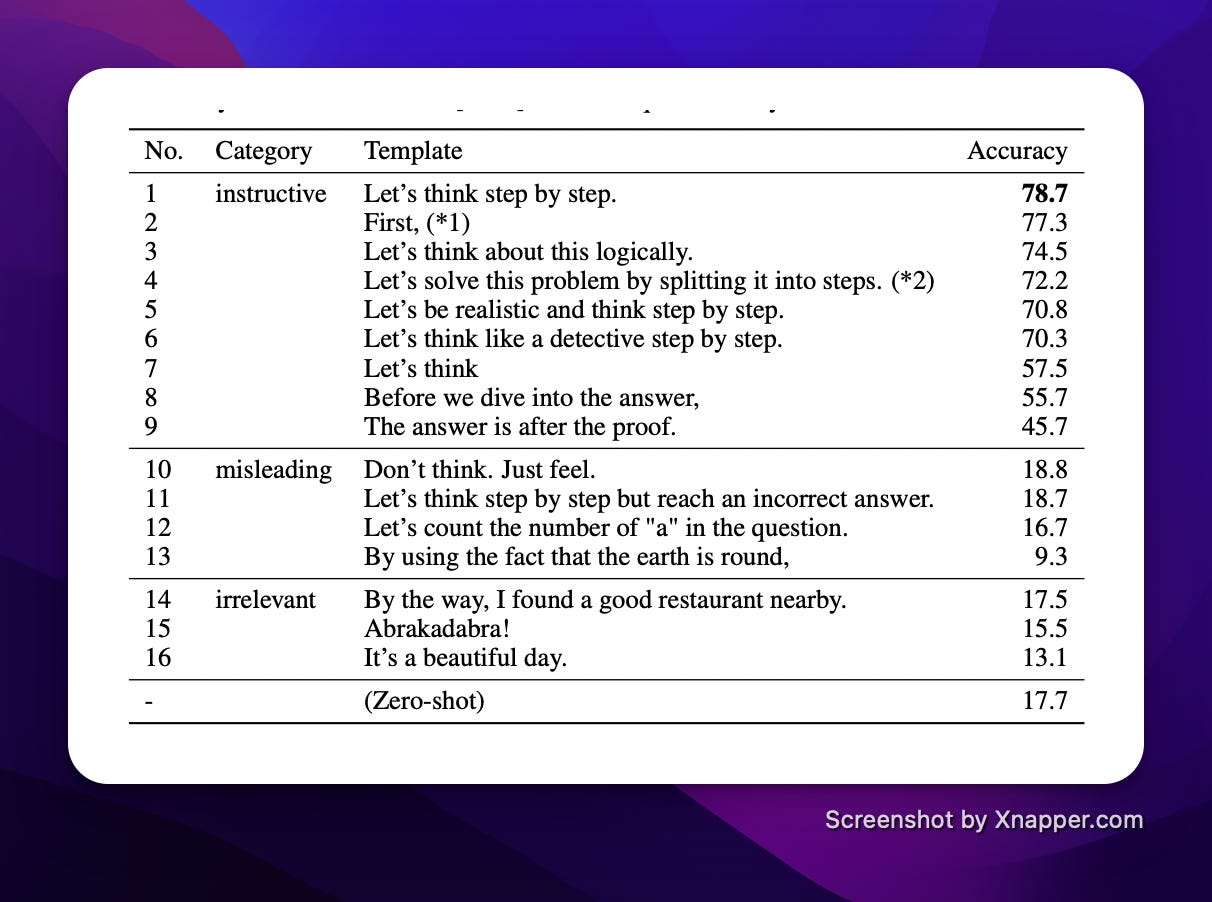

In the paper “Large Language Models are Zero-Shot Reasoners”, researchers compared how accurate a model’s answers were when different phrases were included in so-called “zero-shot prompts” (that is, prompts that pose the question to a Large Language Model AI directly, without any worked examples). “Let’s think step by step” increased the accuracy of the output by nearly 4.5x over the base case (from 17.7% to 78.7% on the MultiArith arithmetic benchmark).
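For the curious, here is a minimal sketch of what adding the phrase looks like in code. The function names and the `Q:`/`A:` layout are my own illustration, loosely modeled on the paper's two-pass approach (first elicit the reasoning, then ask for the final answer). `ask_model` is a hypothetical stand-in for whatever client you use to call a model; it is not a real library function.

```python
from typing import Callable

TRIGGER = "Let's think step by step."


def plain_prompt(question: str) -> str:
    """Base case: just the question, with no reasoning trigger."""
    return f"Q: {question}\nA:"


def step_by_step_prompt(question: str, trigger: str = TRIGGER) -> str:
    """Zero-shot prompt with the reasoning trigger placed after 'A:'."""
    return f"Q: {question}\nA: {trigger}"


def answer_with_reasoning(question: str, ask_model: Callable[[str], str]) -> str:
    """Two passes: elicit the reasoning, then ask for the final answer.

    `ask_model` is an assumed callable that takes a prompt string and
    returns the model's completion as a string.
    """
    reasoning_prompt = step_by_step_prompt(question)
    reasoning = ask_model(reasoning_prompt)
    # Feed the model its own reasoning back and ask it to commit to an answer.
    answer_prompt = f"{reasoning_prompt} {reasoning}\nTherefore, the answer is"
    return ask_model(answer_prompt)
```

The only difference between the base case and the "incantation" is one short sentence at the end of the prompt; everything else is plumbing.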

While including this phrase noticeably improves the quality of GPT’s response, you can improve it even further by walking through the steps with GPT in so-called “chain of thought” prompts. Researchers in another paper, “Chain-of-Thought Prompting Elicits Reasoning in Large Language Models”, note that “chain-of-thought reasoning is an emergent property of model scale that allows sufficiently large language models to perform reasoning tasks that otherwise have flat scaling curves.”
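A chain-of-thought prompt can be sketched as one or more worked examples, each with its reasoning spelled out, followed by the new question. The example problems, the `WorkedExample` type, and the function names below are all invented for illustration; this is one plausible way to assemble such a prompt, not the paper's code.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkedExample:
    question: str
    steps: List[str]  # the intermediate reasoning, one sentence per step
    answer: str


def render_example(ex: WorkedExample) -> str:
    """Render a solved example with its reasoning written out."""
    reasoning = " ".join(ex.steps)
    return f"Q: {ex.question}\nA: {reasoning} The answer is {ex.answer}."


def chain_of_thought_prompt(examples: List[WorkedExample], question: str) -> str:
    """Worked examples first, then the new question left open for the model."""
    blocks = [render_example(ex) for ex in examples]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


example = WorkedExample(
    question="A baker has 3 trays of 12 rolls and sells 10. How many rolls are left?",
    steps=["3 trays of 12 rolls is 36 rolls.", "36 minus 10 is 26."],
    answer="26",
)
prompt = chain_of_thought_prompt(
    [example],
    "A shelf holds 4 rows of 9 books. 7 are borrowed. How many remain?",
)
print(prompt)
```

Notice where the effort goes: the code is trivial, but someone still has to write the `steps`.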

The efficacy of “chain of thought” prompts, though, doesn’t come for free: they require me, as the prompt engineer, to actually think about the steps needed to break down and solve a problem. These prompts aren’t “recipes”: do X, do Y, do Z. They’re abstract conceptual models of how to approach and solve a problem.

Hm. That idea sounds familiar.

That sounds like… a pattern language.

As a baby programmer nearly two decades ago (#old), no book influenced my thinking more than Head First Design Patterns.

The idea that seemingly-diverse problems could have consistent solution “shapes”, and that these shapes could be named and learned, completely changed my approach to coding. Martin Fowler, who has written extensively about software design patterns over the last several decades, notes that:

“A common definition of a pattern is that it is ‘a solution to a problem in a context’…. For me, a pattern is primarily a way to chunk up advice about a topic. Chunking is important because there's such a huge amount of knowledge you need to write software. As a result there needs be ways to divide knowledge up so you don't need to remember it all.”

So a pattern is a generalizable “shape” of a solution for a category of problem, or multiple categories of problems, that recurs over time. While the pattern is common, it’s also abstract enough that it can’t simply be captured as a library for easy application. Patterns represent ways of reasoning about a system.

The concept of design patterns didn’t originate in software; the parent of this kind of approach to solving problems in a “nameable” way is architect and design theorist Christopher Alexander, specifically in his 1977 book “A Pattern Language: Towns, Buildings, Construction”. Engineering leader and author Will Larson summarizes one of the most important takeaways from Alexander’s work:

“Even the most complicated, sophisticated things are defined by a small number of composable patterns. Cities are defined by transit, property values by school districts, and towns by main streets. The immense complexity of large cities can be predicted by these rules, and these rules can generate an infinite variety of recognizable cities.”

These approaches, which have been so influential on my career as an engineer, bear striking similarity to the work of wrangling GPT today. Breaking down a desired solution into “chunks” of patterns within a chain of thought prompt, and then combining those patterns within a specific context, can produce emergent outcomes of “immense complexity”.

But the profundity of this realization extends well beyond AI prompting. (If I am allowed to call my own realization profound.) GPT makes the universality of Alexander’s work extremely apparent. Design patterns don’t just apply to AI prompts or software design or urban architecture: every challenge we face in our lives can be broken down into cross-cutting, composable patterns.

Wisdom is generally associated with age (the trope of the “elder”), even though age is sadly not a universally determining factor in wisdom. That is: the older you are, the more likely you are to be wise, but age alone doesn’t make you wise. So what is it about age that correlates with wisdom? Let’s define wisdom in this case practically, as the ability to respond with “right action” in the face of a question or challenge. Our exploration of design patterns demonstrates that right action emerges as a result of combining the appropriate composable patterns within the context of the problem.

But knowing what patterns to apply, or even which patterns exist, requires experience. And experience takes time, as anyone familiar with Malcolm Gladwell’s “ten thousand hour” rule of practice would argue. But what’s the marginal value of each of those hours? Wisdom requires a comprehensive awareness of patterns, along with the recognition of how those patterns are deployed (and ideally practice deploying them, in various combinations). It’s not just the amount of experience that matters; it’s the diversity of experience.

Thankfully, we don’t need to “practice” all of these experiences ourselves. Reading provides us with a fantastic means of observing and learning patterns from the lives and actions of others (even when those lives and actions are fictional). But again, diversity of reading is important, as is quality of both what we read and how we cement our learnings.

Put this all together, and we have a prompt for living with wisdom.

Let’s think about this step by step:

Imagine that you are a human person living in a modern, complex, rapidly evolving world. A deluge of information breaks into your reality every day. Increasingly, the “realness” of that information is questionable.

As a human, you seek to improve your wisdom in order to respond to the world with compassion, to contribute to a better future for all while establishing a flourishing life for yourself today.

To grow in this wisdom, you should follow these steps:

1. Continuously seek new and diverse experiences in your day to day life. Immerse yourself fully in these experiences; mindfully observe yourself and your situation. Some small percentage of these experiences should feel mildly uncomfortable and challenging.

2. Continuously seek and read sources of meaningful information. Constrain your information diet to high value inputs. Seek perspectives that challenge your world view.

3. Reflect on these experiences and readings by synthesizing your observations in written notes. Seek companions and community in which these ideas can be openly explored and civilly debated.

4. Continue steps 1-3 every day of your life.

As with any prompt, I can’t tell you the exact response. But there’s no better time to start experimenting.